Volumetric Surveys That Hold Up Under Scrutiny

- Derrick Campeau

- Apr 29


Volumetric surveying is simple in principle and unforgiving in practice. Every reported stockpile, cut/fill, or earthworks quantity is a mathematical comparison between a measured surface and a chosen base surface. The difference between a reliable result and a risky estimate usually comes down to a short list of technical decisions: datum selection, control quality, RTK correction health, the presence and layout of ground control points and independent check points, image geometry, point-cloud classification, and whether the provider validates the final surface against independent evidence rather than trusting the software report alone. Industry-accepted standards from ASPRS, FGDC, USGS, and NOAA all point in the same direction: control must be more accurate than the product, checkpoints must be independent, and accuracy must be tested and reported against higher-order reference data.

For technically literate clients, the key distinction is not “drone versus surveyor.” It is full-surface measurement with defensible control versus partial, sparse, or poorly validated measurement. Modern drone photogrammetry and drone LiDAR can produce highly useful, repeatable, centimeter-scale topographic products when paired with RTK/PPK, thoughtfully designed GCPs, independent checkpoints, and disciplined QA/QC. They also fail in predictable ways when providers rely on RTK alone, omit check points, use weak base surfaces, or skip classification and validation. Published studies show RTK/direct-georeferencing workflows without GCPs can be good enough for some rapid-mapping tasks, but vertical error often remains the weak link and improves materially when GCPs are added and distributed correctly.

If the client’s objective is monthly mine inventory, quarry reconciliation, stockpile tracking, construction progress quantities, or earthworks balancing, the best default workflow is neither lowest-cost drone imagery nor traditional boots-on-pile measurement. It is a survey-grade aerial workflow: RTK/PPK tied to a known datum, GCPs used where risk or geometry demands them, independently surveyed checkpoints, documented residuals, clean DTM/DSM outputs, and a report that states the achieved accuracy, not just the intended one. Where final legal, boundary, or high-consequence construction layout is involved, total stations, levels, and higher-order control still remain essential.

Publicly, Elev8 positions itself on the right side of that distinction. Its Ontario mapping and volumetrics pages explicitly emphasize RTK with GCPs and checkpoints, NTRIP/live corrections, CAD/GIS/Civil 3D/BIM-ready outputs, control-and-validation language, and delivery accountability, including volumetric reporting within 48 hours and scope-based guarantees. That is a stronger technical position than that of many public competitor pages reviewed for this report, which often advertise speed, convenience, or “accurate volumes” without clearly describing checkpoints, independent validation, or formal QA/QC. Based on what Elev8 states publicly, that is the strongest verifiable way to position the company: not as a magic black box, but as a provider whose workflow is built around control, validation, and usable engineering outputs.

Volumetric survey fundamentals

At its core, a volumetric survey measures a topographic surface and compares it to a reference condition: a design surface, a historical surface, a toe-and-crest base surface, or an interpolated ground model. Whether the final model is built from total-station shots, GNSS points, image-based photogrammetry, or LiDAR, the same physical problem remains: if the measured surface, the base surface, or the coordinate control is wrong, the volume is wrong. That is why “pretty” 3D models are not enough. The quantity only becomes defensible when the reference system, source data, and validation process are defensible. ASPRS explicitly separates product accuracy from production methodology, and FGDC’s NSSDA similarly treats accuracy as something that must be tested against higher-accuracy reference positions, not assumed.

This is also why full-surface methods usually outperform manual or sparse methods for piles and earthworks. Public stockpile workflows from Ontario providers emphasize that drone elevation models use all points on the modeled surface rather than a handful of shots or tape-derived approximations, which is the right conceptual advantage for irregular geometries. In peer-reviewed stockpile comparisons, drone-based workflows produced volume estimates close to known or reference values while dramatically reducing field time versus manual or terrestrial-only methods.
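The surface-versus-base comparison described in this section can be sketched in a few lines. The example below is a minimal illustration, not any provider's production method: it assumes both surfaces have already been resampled to the same regular grid in the same datum, and the function name `volume_above_below` and the sample elevations are hypothetical.

```python
from typing import List, Tuple

Grid = List[List[float]]  # elevations in metres, one value per grid cell


def volume_above_below(measured: Grid, base: Grid, cell_size_m: float) -> Tuple[float, float]:
    """Return (volume above base, volume below base) in cubic metres.

    Assumes both grids share the same shape, origin, datum and cell size.
    """
    if len(measured) != len(base) or any(len(m) != len(b) for m, b in zip(measured, base)):
        raise ValueError("measured and base grids must have the same shape")
    cell_area = cell_size_m ** 2
    above = below = 0.0
    for m_row, b_row in zip(measured, base):
        for z_measured, z_base in zip(m_row, b_row):
            dz = z_measured - z_base
            if dz > 0:
                above += dz * cell_area
            else:
                below += -dz * cell_area
    return above, below


# Hypothetical 3 x 3 pile on a flat base at 100.0 m, 1 m cells.
measured = [
    [100.0, 100.5, 100.0],
    [100.5, 102.0, 100.5],
    [100.0, 100.5, 100.0],
]
flat_base = [[100.0] * 3 for _ in range(3)]
pile_volume, _ = volume_above_below(measured, flat_base, cell_size_m=1.0)

# Same measured surface, but the base surface is set 0.10 m too low.
low_base = [[99.9] * 3 for _ in range(3)]
inflated_volume, _ = volume_above_below(measured, low_base, cell_size_m=1.0)

print(f"correct base: {pile_volume:.2f} m3, base 0.10 m low: {inflated_volume:.2f} m3")
```

In this toy case the measured surface is identical in both runs; only the base surface changes, and the reported volume changes with it. That is the sense in which a wrong base surface produces a wrong quantity even when the capture itself is good.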

What the accuracy language actually means

The terms below are routinely mixed together in marketing copy, but standards bodies treat them differently.

| Concept | Technical meaning | Why clients should care |
| --- | --- | --- |
| Absolute accuracy | How close the delivered coordinates/elevations are to independently surveyed truth in the chosen datum | Determines whether quantities can be trusted across time, across contractors, and against design/control |
| Relative accuracy | Internal geometric consistency of the dataset, even before comparison to ground control | Determines whether the model is internally stable and repeatable |
| Horizontal accuracy | Error in X/Y position | Important for edges, pile footprints, berms, breaklines, and planimetric mapping |
| Vertical accuracy | Error in Z/elevation | Usually the load-bearing metric for volumes, cut/fill, grades, and reconciliation |
| Precision | Closeness of agreement among repeated measurements under stated conditions | Tells you whether the process is stable |
| Repeatability | Precision under repeatability conditions | Tells you whether repeat scans will tell the same story, which is essential for trend analysis |

This framing comes directly from ASPRS, USGS, and NIST. ASPRS defines horizontal and vertical product accuracy classes and distinguishes absolute from relative accuracy; USGS defines lidar “data internal precision” as internal geometric quality without regard to surveyed ground control; and NIST notes that precision is the closeness of agreement between independent test results under stipulated conditions and that repeatability is a precision concept, not a synonym for truth.

A useful practical translation for clients is this: absolute accuracy answers “is the model tied correctly to the world?” while relative accuracy answers “is the model internally self-consistent?” A dataset can look smooth and repeatable yet still be vertically biased, especially in RTK-only, no-GCP workflows. That is exactly why published UAV direct-georeferencing studies still recommend at least some ground control and careful checkpointing for demanding work.
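One way to see the difference between a biased model and a noisy one is to split checkpoint residuals into a mean offset and a spread. The sketch below assumes a handful of hypothetical checkpoint elevations; the function name `residual_summary` and all values are illustrative only.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def residual_summary(model_z: Sequence[float], surveyed_z: Sequence[float]) -> Tuple[float, float, float]:
    """Return (mean bias, standard deviation, RMSE) of model-minus-survey residuals, in metres."""
    residuals = [m - s for m, s in zip(model_z, surveyed_z)]
    bias = mean(residuals)                            # systematic vertical offset
    spread = stdev(residuals)                         # scatter around that offset
    rmse = sqrt(mean([r * r for r in residuals]))     # combines bias and scatter
    return bias, spread, rmse


# Hypothetical checkpoints: the model sits about 8 cm high everywhere,
# but the residuals agree with each other to within a centimeter.
surveyed = [100.00, 101.50, 99.80, 102.20, 100.90]
model = [100.08, 101.59, 99.87, 102.28, 100.98]

bias, spread, rmse = residual_summary(model, surveyed)
print(f"bias={bias:.3f} m, spread={spread:.3f} m, rmse={rmse:.3f} m")
```

A small spread with a large bias is the "smooth, repeatable, but wrong" case: the model is internally consistent, yet only independent checkpoints reveal the offset.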

Ground control, check points, RTK corrections, and QA/QC

Ground control points, check points, and RTK corrections do not do the same job.

GCPs are control observations used to constrain the model during adjustment. Check points are independent observations held out of that adjustment and used only to test whether the model is actually right. RTK/PPK improves the camera or sensor positions, reducing field-control burden, but it does not eliminate the need for independent validation on consequential projects. ASPRS is explicit that checkpoints used for accuracy assessment must come from an independent source of higher accuracy, and that source must be at least three times more accurate than the product requirement. ASPRS also states that when testing is performed, planimetric or non-vegetated vertical accuracy cannot be based on fewer than 20 checkpoints.

For aerial triangulation and photogrammetric products, ASPRS further states that ground control used for aerial triangulation should be more accurate than the expected product and, for elevation-related products, should be roughly one-quarter of the intended product RMSE in each coordinate component. That is the technical reason that “cheap” control is rarely cheap: weak control quietly caps the best accuracy the project can achieve.
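The rules quoted in the two paragraphs above (independent checkpoints, at least 20 of them, checkpoints three times more accurate than the product, and control at roughly one-quarter of the product RMSE) can be turned into a simple pre-flight sanity check. This is a sketch under those stated ratios; the function name `control_plan_issues`, the point IDs, and the accuracy figures are hypothetical.

```python
from typing import Iterable, List


def control_plan_issues(
    gcp_ids: Iterable[str],
    checkpoint_ids: Iterable[str],
    gcp_rmse_m: float,
    checkpoint_rmse_m: float,
    product_rmse_m: float,
    min_checkpoints: int = 20,
) -> List[str]:
    """List the ways a control plan falls short of the ratios quoted in the text."""
    gcps, checks = set(gcp_ids), set(checkpoint_ids)
    issues: List[str] = []
    shared = sorted(gcps & checks)
    if shared:
        issues.append(f"points used as both GCP and checkpoint: {shared}")
    if len(checks) < min_checkpoints:
        issues.append(f"only {len(checks)} checkpoints; {min_checkpoints} required")
    if checkpoint_rmse_m > product_rmse_m / 3.0:
        issues.append("checkpoint survey is not 3x more accurate than the product")
    if gcp_rmse_m > product_rmse_m / 4.0:
        issues.append("ground control is looser than one-quarter of the product RMSE")
    return issues


# Hypothetical plan for a 5 cm product: passes every check.
good = control_plan_issues(
    gcp_ids=[f"GCP{i}" for i in range(1, 6)],
    checkpoint_ids=[f"CP{i:02d}" for i in range(1, 21)],
    gcp_rmse_m=0.010,
    checkpoint_rmse_m=0.015,
    product_rmse_m=0.05,
)

# Hypothetical weak plan: few checkpoints, one reused GCP, loose survey accuracy.
weak = control_plan_issues(
    gcp_ids=["GCP1", "GCP2", "GCP3"],
    checkpoint_ids=["CP01", "CP02", "CP03", "CP04", "CP05", "GCP1"],
    gcp_rmse_m=0.020,
    checkpoint_rmse_m=0.030,
    product_rmse_m=0.05,
)
print(good)
print(weak)
```

The arithmetic is trivial, which is the point: for a 5 cm product, control needs to be at about 1.25 cm and checkpoints at about 1.7 cm, so survey-quality ground work is implied before the aircraft ever flies.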

NOAA’s NGS real-time GNSS guidance is equally blunt on field practice. Before beginning new RT data collection, a check shot should always be taken on a known point; known points should be checked with the same initialization as subsequent points; and for higher-accuracy classes, redundant occupations staggered in time are recommended. NGS gives typical precision classes for real-time work ranging from roughly 1–2 cm horizontal and 2–4 cm vertical at the high end of RT1 to about 4–6 cm horizontal and 4–8 cm vertical for RT3, with field procedures, baseline length, redundancy, PDOP, and multipath controls all explicitly tied to the class achieved.
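The check-shot habit described above is easy to express as a comparison between a published control coordinate and a fresh RTK observation. The sketch below is illustrative: the function name `check_shot`, the coordinates, and the choice of tolerances (here the 2 cm horizontal and 4 cm vertical figures quoted for RT1) are assumptions, and a real project would take its tolerances from the scope of work.

```python
from math import hypot
from typing import Tuple

Point = Tuple[float, float, float]  # easting, northing, elevation in metres


def check_shot(known: Point, observed: Point, tol_h_m: float, tol_v_m: float) -> Tuple[float, float, bool]:
    """Return (horizontal miss, vertical miss, within tolerance) for one check shot."""
    dh = hypot(observed[0] - known[0], observed[1] - known[1])
    dv = abs(observed[2] - known[2])
    return dh, dv, (dh <= tol_h_m and dv <= tol_v_m)


# Hypothetical control monument and two RTK observations on it.
monument = (5000.000, 2000.000, 250.000)
good_shot = (5000.012, 1999.991, 250.021)
bad_shot = (5000.012, 1999.991, 250.060)

dh, dv, ok = check_shot(monument, good_shot, tol_h_m=0.02, tol_v_m=0.04)
_, _, bad_ok = check_shot(monument, bad_shot, tol_h_m=0.02, tol_v_m=0.04)
print(f"dh={dh:.3f} m, dv={dv:.3f} m, ok={ok}; second shot ok={bad_ok}")
```

A failed check shot is a reason to re-initialize and re-observe before collecting anything else, since every later point would inherit the same problem.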

For lidar, USGS 3DEP requirements provide a good benchmark for how the profession treats validation. USGS requires that absolute and relative vertical accuracy be verified before derivative products are developed; makes checkpoints independent of calibration/control points; requires project-appropriate quantities and distributions of checkpoints; and distinguishes absolute vertical accuracy from internal precision metrics such as intraswath precision and swath-overlap consistency. The current USGS Lidar Base Specification sets a minimum Quality Level 2 standard for many national collections, with aggregate nominal pulse density of at least 2 points/m², smooth-surface precision of no more than 0.06 m RMSDv, swath-overlap RMSDv of no more than 0.08 m, and non-vegetated RMSEv of no more than 0.10 m. Those are minimum broad-area specification levels, not proof that every site-specific engineering survey should accept decimeter error. They are invaluable, however, as a reality check on how survey-grade lidar is supposed to be validated and reported.
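The Quality Level 2 figures quoted above lend themselves to a simple threshold check on a lidar QA report. The sketch below encodes only the four limits stated in this section; the function name `ql2_failures` and the sample metric values are hypothetical, and a site-specific engineering scope may well set tighter limits.

```python
from typing import List

# Limits as quoted in the text for USGS Quality Level 2.
QL2_MIN_PULSE_DENSITY = 2.0      # points per square metre, minimum
QL2_MAX_SMOOTH_SURFACE = 0.06    # metres, smooth-surface precision, maximum
QL2_MAX_SWATH_OVERLAP = 0.08     # metres, swath-overlap difference, maximum
QL2_MAX_NVA_RMSE = 0.10          # metres, non-vegetated vertical RMSE, maximum


def ql2_failures(
    pulse_density_per_m2: float,
    smooth_surface_rmsd_m: float,
    swath_overlap_rmsd_m: float,
    nva_rmse_m: float,
) -> List[str]:
    """Return the names of the QL2 limits that the reported metrics fail."""
    failures: List[str] = []
    if pulse_density_per_m2 < QL2_MIN_PULSE_DENSITY:
        failures.append("pulse density")
    if smooth_surface_rmsd_m > QL2_MAX_SMOOTH_SURFACE:
        failures.append("smooth-surface precision")
    if swath_overlap_rmsd_m > QL2_MAX_SWATH_OVERLAP:
        failures.append("swath overlap")
    if nva_rmse_m > QL2_MAX_NVA_RMSE:
        failures.append("non-vegetated vertical accuracy")
    return failures


# Hypothetical QA reports.
passing = ql2_failures(4.0, 0.04, 0.06, 0.07)
failing = ql2_failures(1.5, 0.04, 0.09, 0.12)
print(passing, failing)
```

Note that three of the four checks concern internal consistency or density; only the last one compares the data to independent ground truth, which mirrors the absolute-versus-relative distinction made earlier.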

A best-practice control workflow

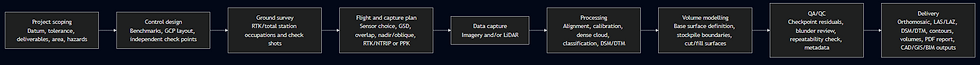

That workflow is not overkill. It is the shortest path to a number that can survive technical questioning, invoice scrutiny, or reconciliation meetings. It also aligns closely with Elev8’s publicly stated “Strategic Site Assessment → High-Resolution Capture → Processing & Modeling → Delivery & Insights” workflow and with its repeated public emphasis on RTK, GCPs, checkpoints, and usable outputs rather than visual models alone.

Accuracy and tolerances

No universal statute says one stockpile survey must be within exactly 2.0% and another within exactly 3.0%. Standards bodies tend to define how to test and report accuracy, while contract documents define what threshold is acceptable for a specific job. FGDC’s NSSDA explicitly says it does not define threshold values and that agencies or users must establish acceptable thresholds for their applications. That matters because mining inventory, construction progress, final grade acceptance, and legal surveying are not the same risk class.

That said, there are still defensible default expectations when no site-specific tolerance is provided. FGDC Part 4 offers informative A/E/C tolerances for many construction and earthworks contexts; ASPRS and USGS define how checkpoints and vertical accuracy are to be validated; and peer-reviewed stockpile studies give a sense of what careful drone workflows can achieve in practice. The matrix below is therefore a practical decision matrix, not a substitute for contract specifications. It synthesizes official standards with published case-study performance.

| Use case | Usually acceptable by default | Caution zone | Usually unacceptable by default |
| --- | --- | --- | --- |
| Mining and quarry stockpile inventory | Independent checkpoints, datum-controlled workflow, and volume differences in the low single digits for well-exposed piles; repeatable scans for reconciliation | RTK-only, no checkpoints, or inconsistent base-surface definitions; unexplained drift between monthly scans | Manual approximation or sparse-shot methods used for billing/reconciliation on irregular or hazardous piles |
| Construction stockpiles and material tracking | Centimeter-scale site control with repeatable orthomosaic/DTM products and independently checked quantities | “Fast drone scan” without checkpoint evidence or unclear base surfaces | Volumes used for payment or dispute resolution without independent validation |
| Earthworks progress and cut/fill | Sub-decimeter vertical performance for broad progress and balancing, with tighter checkpoints where quantities matter | Dense vegetation, poor texture, or no breakline/classification control | Using a broad-brush drone model as the sole acceptance basis for precise final grades |
| Construction final layout, inverts, or critical acceptance points | Ground methods remain the acceptance instrument; drone data is excellent for screening and progress context | RTK rover only on isolated critical points without redundancy | Treating a volumetric drone model as a replacement for layout, legal, or final-tolerance staking |

Why these defaults? FGDC Part 4 shows that mapping and grading tolerances vary dramatically with the task: general construction site plans, detailed feature plans, grading/excavation plans, field construction layout, and in-place volume measurement all sit in different tolerance bands. For example, its informative tables list broad project tolerances for grading/excavation plans and separate, much tighter numbers for some field layout tasks, reinforcing the point that volumetric surveying is ideal for quantities and trends, but not automatically the right acceptance tool for final construction layout.

On the mining and stockpile side, peer-reviewed quarry work found drone stockpile error of 2.6% versus 1.3% for tape in one case, both under a stated maximum allowable error of ±3%, while a classic UAV-versus-total-station stockpile comparison found the UAV estimate was closer to actual volume than the total-station estimate in that particular test. Those are not universal guarantees, but they are strong indicators that well-run drone volumetrics belong in the accepted-tool category for inventory and operational decision-making.
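The percentage figures above are relative volume errors compared against an allowable limit. The sketch below shows that arithmetic; the reference volume of 1,000 m³ and the two estimates are hypothetical values chosen only to reproduce the 2.6% and 1.3% quoted in the text, and the function name `percent_volume_error` is illustrative.

```python
def percent_volume_error(estimate_m3: float, reference_m3: float) -> float:
    """Absolute volume error as a percentage of the reference volume."""
    if reference_m3 <= 0:
        raise ValueError("reference volume must be positive")
    return abs(estimate_m3 - reference_m3) / reference_m3 * 100.0


ALLOWABLE_PERCENT = 3.0      # the stated maximum allowable error of +/-3%

reference = 1000.0           # hypothetical reference volume, m3
drone_estimate = 1026.0      # hypothetical, gives 2.6%
tape_estimate = 1013.0       # hypothetical, gives 1.3%

drone_error = percent_volume_error(drone_estimate, reference)
tape_error = percent_volume_error(tape_estimate, reference)

for name, err in (("drone", drone_error), ("tape", tape_error)):
    verdict = "within" if err <= ALLOWABLE_PERCENT else "outside"
    print(f"{name}: {err:.1f}% ({verdict} the allowable limit)")
```

Both methods pass in that case study, so the percentage alone does not separate them; the field-time and safety differences discussed later in the article do.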

RTK-only, GCP-assisted, and LiDAR reality

One of the most useful practical findings in recent UAV research is that direct georeferencing alone can be good, but it is not automatically good enough for every job. In one photogrammetric study, RMSE without GCPs was about 0.041 m horizontally and 0.087 m vertically; with stronger GCP density, it improved to about 0.015 m horizontal and 0.032 m vertical. The same study concluded that GCPs should be uniformly distributed and include at least one near the center, because local DSM accuracy degraded as the distance to the nearest GCP increased.
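Because that study links local accuracy to the distance from the nearest GCP, a control layout can be screened by asking how far the worst-served part of the site is from any control point. The sketch below assumes a hypothetical 100 m × 100 m site sampled on a 10 m grid; the function name `max_distance_to_nearest_gcp` and the layouts are illustrative.

```python
from math import hypot
from typing import List, Sequence, Tuple

XY = Tuple[float, float]


def max_distance_to_nearest_gcp(sample_points: Sequence[XY], gcps: Sequence[XY]) -> float:
    """Largest distance (metres) from any sample point to its nearest GCP."""
    return max(
        min(hypot(px - gx, py - gy) for gx, gy in gcps)
        for px, py in sample_points
    )


# Hypothetical square site sampled every 10 m.
samples: List[XY] = [(float(x), float(y)) for x in range(0, 101, 10) for y in range(0, 101, 10)]

corner_gcps: List[XY] = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0), (100.0, 100.0)]
corners_plus_center = corner_gcps + [(50.0, 50.0)]

worst_corners_only = max_distance_to_nearest_gcp(samples, corner_gcps)
worst_with_center = max_distance_to_nearest_gcp(samples, corners_plus_center)
print(f"corners only: {worst_corners_only:.1f} m, with center GCP: {worst_with_center:.1f} m")
```

Adding the single center point shortens the worst-case distance noticeably, which is consistent with the study's advice to distribute GCPs uniformly and include at least one near the center.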

A 2021 Remote Sensing paper on UAV-mounted GNSS RTK likewise warned that systematic elevation error can persist in no-GCP workflows. The corresponding preprint reported that single-flight or poorly configured RTK-only models could show systematic elevation error of up to several decimeters, while combining nadir and oblique image acquisition from the same altitude reduced that error to below 0.03 m. That is exactly the sort of nuance competent providers account for and superficial providers ignore.
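The reason systematic elevation error matters so much for volumes is that a uniform vertical offset multiplies directly by the footprint area. The sketch below makes that simplifying assumption (a constant bias across the whole pile, with the base surface unaffected); the footprint, pile volume, and function name `volume_error_from_bias` are hypothetical.

```python
def volume_error_from_bias(footprint_area_m2: float, vertical_bias_m: float) -> float:
    """Volume error (m3) caused by a uniform vertical bias over a footprint."""
    return footprint_area_m2 * vertical_bias_m


# Hypothetical stockpile: 2,500 m2 footprint holding 10,000 m3.
footprint_m2 = 2500.0
pile_volume_m3 = 10000.0

# 0.03 m is the reduced error quoted in the text; 0.30 m stands in for
# "several decimeters" and is an assumed value for illustration.
small_bias_error = volume_error_from_bias(footprint_m2, 0.03)
large_bias_error = volume_error_from_bias(footprint_m2, 0.30)

for label, err in (("0.03 m bias", small_bias_error), ("0.30 m bias", large_bias_error)):
    print(f"{label}: {err:.0f} m3 ({err / pile_volume_m3 * 100:.2f}% of the pile)")
```

Random noise tends to average out over thousands of cells, but a bias does not, which is why a workflow that removes systematic elevation error is worth more to a volume calculation than one that merely looks sharper.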

Comparing modern and traditional methods

The real market comparison is not “new tech good, old tech bad.” It is surface completeness, field safety, repeatability, datum discipline, and validation burden.

Method comparison table

| Method | Best fit | Typical performance envelope | Main strengths | Main limits | Typical operational tempo |
| --- | --- | --- | --- | --- | --- |
| Drone photogrammetry with RTK/PPK plus GCPs and checkpoints | Open stockpiles, earthworks, construction progress, accessible quarries | Often centimeter-scale horizontal and low-centimeter vertical performance when control is designed correctly; published no-GCP direct georeferencing can degrade to ~4 cm H / ~9 cm V in some studies | Full-surface coverage, rich visuals, orthomosaics, DSM/DTM, repeatability, safer than walking piles | Sensitive to poor texture, glare, deep shadow, dense vegetation, weak control design | Field capture in minutes to hours; reporting often same day to a few days |
| Drone LiDAR with RTK plus checkpoints | Vegetated terrain, low-texture surfaces, complex relief, bare-earth extraction | USGS QL2 minimum reference point: RMSEv ≤ 0.10 m, smooth-surface precision ≤ 0.06 m, swath overlap RMSDv ≤ 0.08 m; site-specific engineering jobs can be designed tighter | Penetrates vegetation better, strong terrain recovery, robust in lower-texture conditions | Higher equipment and processing cost; classification discipline matters | Fast capture, moderate processing, strong for corridor/terrain jobs |
| Total station survey | Critical discrete points, layout, inverts, final acceptance, legal/engineering detail | Very strong point accuracy when properly controlled | High-confidence point data, ideal for precise discrete features | Sparse coverage unless very labor-intensive; slower and riskier on unstable piles | Hours to days depending on area and density |
| RTK GNSS rover survey | Control, checkpoints, open-sky topo points, staking support | NGS RT classes range from about 1–2 cm H / 2–4 cm V at RT1 to roughly 4–6 cm H / 4–8 cm V at RT3 under stated procedures | Fast point collection, excellent for control and QA | Poor choice for dense full-surface pile modeling by itself; needs redundancy and check shots | Fast for point work, not ideal for full-surface quantities |
| Manual tape or cross-section estimation | Rough internal checks on simple piles | Published case studies show it can be close on simple piles, but speed and safety are weak and geometry assumptions are limiting | Cheap entry, simple tools | Dangerous on active piles, poor surface completeness, weak defensibility on complex geometry | Slow fieldwork; low repeatability on irregular surfaces |

The ranges in this table are synthesized from NOAA NGS RTK classes, FGDC/ASPRS testing rules, USGS lidar specifications, and peer-reviewed stockpile and UAV georeferencing studies.

The cost-and-speed evidence points the same way. In one stockpile case, UAV acquisition took about 30 minutes while total-station data collection took about 3 hours. In another quarry comparison, total workflow time was 35 minutes for the drone method versus 97 minutes for tape, and literature summarized in that paper reported UAS volumetric work to be materially faster and cheaper than cross-sectional and some terrestrial approaches. Public service pages also reflect this market reality: Elev8 advertises volumetric delivery within 48 hours, while at least one Ontario drone volumetrics provider advertises 1–2 day turnaround.

What traditional methods still do better is high-consequence single-point work. For final tolerances on inverts, critical pads, structure corners, or legal boundaries, a total station, digital level, or dedicated control survey remains the acceptance-grade instrument. FGDC Part 4 is very clear that project tolerance is task-dependent, and some construction layout tasks require tighter local control than broad-surface mapping alone is meant to provide. Drone volumetrics should therefore be positioned as the superior method for comprehensive surfaces and recurring quantities, not as a universal substitute for every survey task.

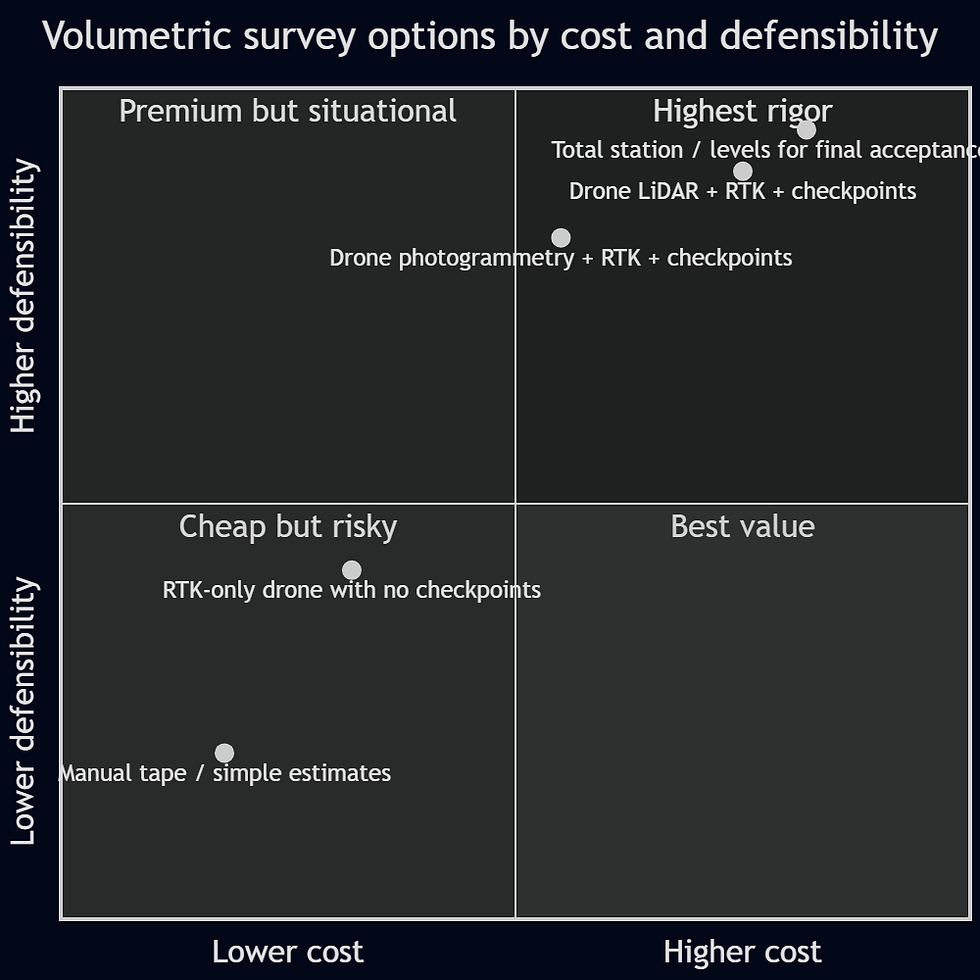

Example chart

The chart in this section is intentionally illustrative rather than empirical. It shows how buyers should think about defensibility rather than sticker price alone.

The placement reflects the standards-based logic in this report: the highest-value workflows are usually those that combine broad surface capture with independent validation, while the riskiest are those that optimize for speed but omit checkpoints or control design.

Why Elev8 is the stronger choice

What makes a volumetric provider credibly better is not the drone logo on the case. It is whether the public workflow shows evidence of survey maturity.

Elev8’s public pages do. The company states that it uses RTK corrections together with GCPs and checkpoints, that it can achieve 1–3 cm accuracy, that it uses NTRIP live corrections, that it exports in CAD/GIS/Civil 3D/BIM-friendly formats, and that its volumetric service is built on “control, validation, and accountability.” It also promises volumetric deliverables within 48 hours and explicitly frames its value as usable engineering intelligence rather than visuals alone. Those are material, verifiable differentiators because they correspond to the exact control-and-validation concerns that the formal standards emphasize.

Just as important, Elev8’s own project and sector pages reinforce that its workflows are aimed at real job sites: quarry/mining environments, inaccessible zones, repeatable progress capture, orthomosaics, high-density point clouds, and organized outputs ready for planning and reporting. Its public case pages are light on quantitative benchmark data, but they do demonstrate a consistent operating model: hazardous-area capture, structured outputs, and repeatable progress documentation.

Public-market contrast

| Publicly visible positioning | What it usually tells a technical buyer |
| --- | --- |
| “Fast and accurate volumes” without explicit checkpoints or QA language | Useful signal on convenience, weak signal on defensibility |
| “All points in the UAV elevation model” | Good surface completeness, but still says nothing about control quality |
| “High-precision LiDAR and mapping” | Strong sensor capability, but buyers still need to ask for checkpoint and datum evidence |
| “RTK with GCPs and checkpoints, NTRIP, survey-grade workflows, usable outputs” | Strongest public signal that the provider understands how volumetric risk is actually controlled |

This contrast is not speculative. In the public pages reviewed for this report, Ontario Drone Survey and High Eye emphasize using all points in a UAV elevation model and the speed/safety benefit of drone volume work; IBW emphasizes high-precision drone LiDAR and mapping; Holland Productions advertises 1–3% volume accuracy and 1–2 day turnaround. Those are all legitimate market positions. The difference is that Elev8 is unusually explicit, on its public pages, about control and validation: RTK with GCPs and checkpoints, NTRIP, survey-grade language, and accountability around delivery and scope. That is the clearest defensible basis for saying Elev8 is the safer technical choice for clients who care about numbers that must stand up to review.

One limitation is worth stating plainly: the Elev8 pages reviewed do not publicly list every hardware model, lidar payload, GNSS receiver, or processing software package. So the strongest verifiable positioning is not “Elev8 uses the best hardware in Canada.” The strongest verifiable positioning is narrower and better: Elev8 publicly describes the right survey-grade workflow disciplines and the right deliverable philosophy. That is exactly the sort of claim sophisticated buyers trust.

Client checklist and best-practice workflow

A good volumetric survey starts before the aircraft leaves the case. Clients who want numbers they can rely on should ask for the following.

Recommended client checklist

Confirm the required decision use: internal inventory, contractor pay quantity, reconciliation, progress, final acceptance, or dispute support. The acceptable tolerance changes with the use case.

Require the provider to state the horizontal datum, vertical datum, coordinate reference system, and geoid model used. In Ontario, as elsewhere in Canada, it matters that the RTK correction network is aligned to the national reference standard.

Ask whether the model will use GCPs, RTK/PPK only, or a hybrid workflow. If no GCPs are planned, ask what independent checkpoint evidence will prove the delivered elevation is not biased.

Ask how many check points will be used, how they will be distributed, and whether they are independent of calibration/control. ASPRS and USGS both treat that independence as essential.

Ask how the base surface will be defined for each pile or cut/fill polygon. Poor base-surface definition is one of the easiest ways to create false certainty.

Ask whether the provider will deliver raw/derived products, not only a PDF number: orthomosaic, DSM/DTM, contours, point cloud, stockpile polygons, and metadata/reporting.

Ask whether the workflow includes blunder review, checkpoint residual reporting, and repeat-scan consistency for recurring surveys. Standards bodies and NGS guidance both emphasize those checks.

If the project includes dense vegetation, low texture, or complex terrain, ask whether LiDAR is being considered instead of or in addition to photogrammetry.

For final layout, inverts, legal limits, or tolerance-critical acceptance, ask what separate ground survey will be used, because volumetric aerial mapping is not the right tool for every acceptance decision.

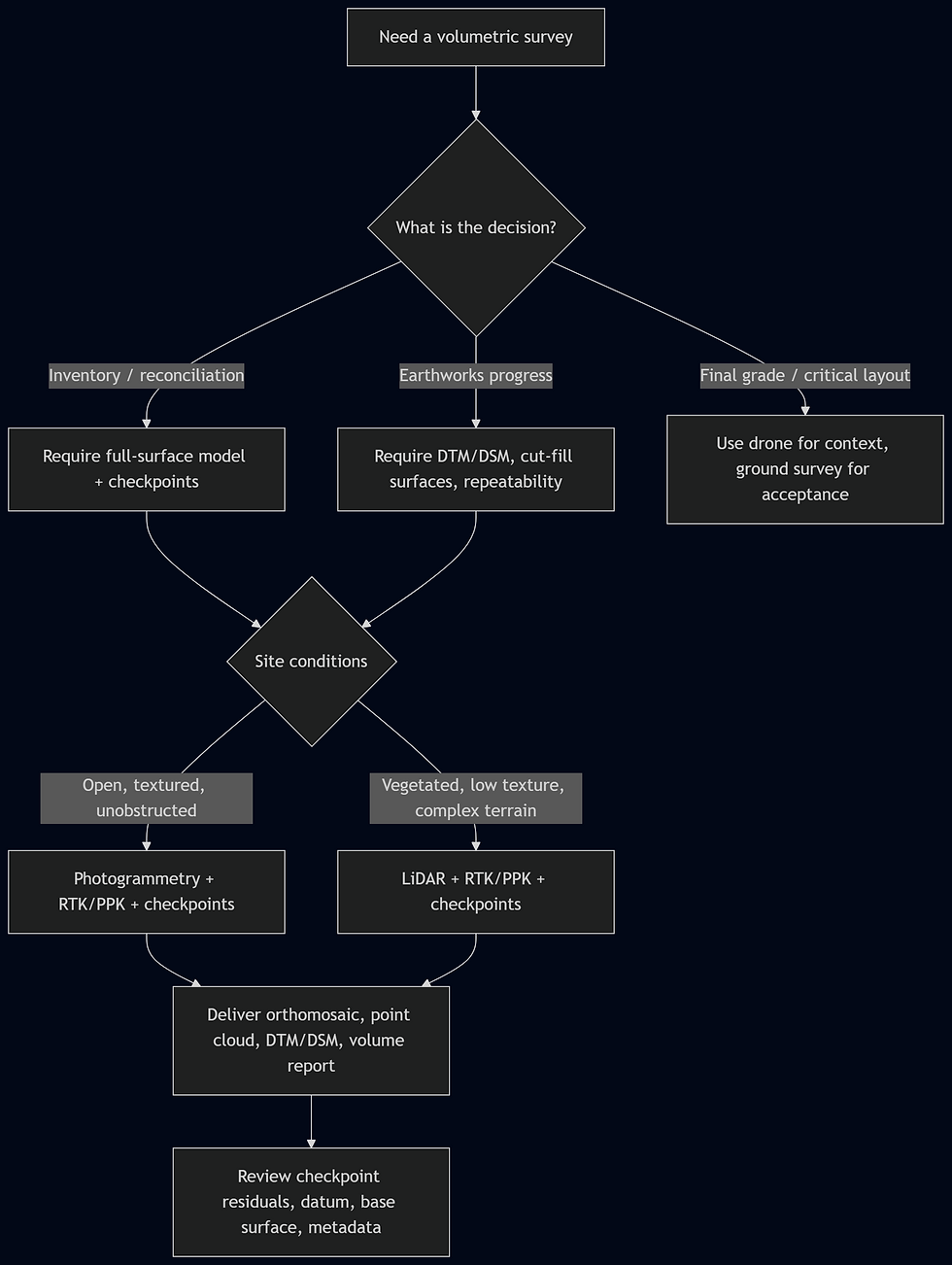

That flowchart is a good way to communicate expertise without overselling. It educates the buyer and naturally steers them toward the workflow Elev8 already describes publicly.

Open questions and limitations

This report deliberately prioritized official standards, government specifications, and peer-reviewed studies. A few gaps remain.

First, while the Elev8 pages reviewed for this report clearly describe RTK, GCPs, checkpoints, LiDAR-supported mapping, deliverable formats, and turnaround, they do not list every specific aircraft, LiDAR payload, GNSS receiver, or software package. Any website copy should therefore avoid naming hardware or software that Elev8 has not publicly confirmed.

Second, “acceptable” mining and stockpile tolerances are often governed by site contracts, commodity value, shrink/swell factors, blasting practice, and reconciliation policy. FGDC and ASPRS tell you how to test and report accuracy; they do not create one universal mine-inventory threshold. Where money or disputes are material, the right move is to put the target tolerance in the scope of work.

Third, public pricing across providers is not apples-to-apples. A packaged low-cost drone service can be materially different from a survey-grade scope involving control, checkpoints, formal reporting, and engineering-ready outputs. Elev8 publicly states volumetric projects start at CAD 1,250+, while lower advertised package pricing exists elsewhere in Canada; buyers should assume differing scope, risk, and deliverables unless shown a side-by-side specification matrix.

Key standards and primary references

The most important standards and references behind this report are ASPRS Positional Accuracy Standards, FGDC’s NSSDA, FGDC Part 4 for A/E/C and construction-related tolerances, USGS’s current Lidar Base Specification, NOAA NGS real-time GNSS guidance, and peer-reviewed UAV stockpile/georeferencing studies in Frontiers, Drones, and ISPRS. Those are the right anchors for technical website content because they let you say something stronger than “our drone is accurate”: they let you say how accuracy is defined, how it is validated, and when a workflow is fit for purpose.
